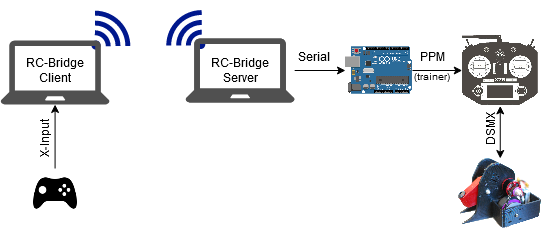

Building on the Trainer Protocol used by the DX6i (relevant post here), I wanted to see if I could control a model over the Trainer Protocol from my computer. I started by deciding what basic data I wanted to send to the controller and coming up with a frame format for it.

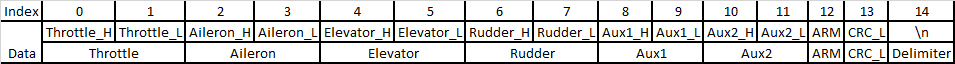

I decided early on that I wanted to just send the raw PPM channels 1-6, a control/arm byte, and a crude checksum. For most robots I only really need 3 channels (drive left + right + weapon/actuator), but passing through the other 3 channels on a standard TX opens up some control/mix options. I wanted the "state" of the generated PPM to be expandable (beyond arm/disarm), so I dedicated a full byte to it. A rough redundancy check (just the low byte of a sum) and a delimiter are added to make sure the data is received consistently.

Here's the Frame Format:

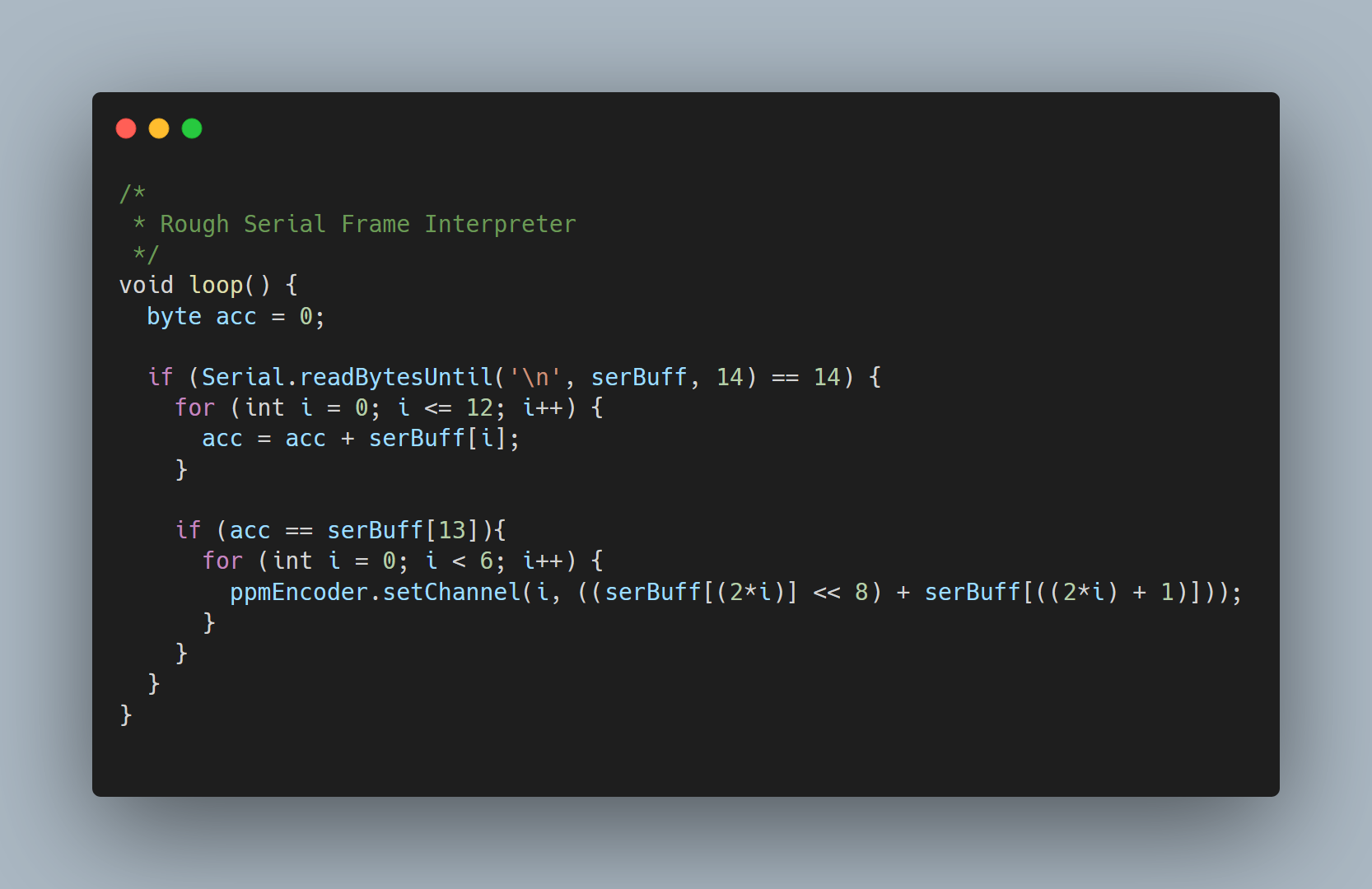
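The exact byte layout is in the frame format diagram, so the following is only a sketch under assumed details: each channel is sent as an unsigned 16-bit big-endian pulse width in microseconds, followed by the control byte, a checksum byte equal to the low byte of the sum of all preceding bytes, and a delimiter byte. The delimiter value `0x7E`, the 15-byte length, and the class name `FrameEncoder` are hypothetical.

```java
import java.util.Arrays;

/**
 * Sketch of a frame encoder. The layout is an assumption, not the project's actual format:
 * 6 channels x 2 bytes (big-endian microseconds), 1 control byte,
 * 1 checksum byte (low byte of the sum of the preceding bytes), 1 delimiter byte.
 */
class FrameEncoder {
    static final int CHANNELS = 6;
    static final byte DELIMITER = (byte) 0x7E;   // hypothetical delimiter value
    static final int FRAME_LEN = CHANNELS * 2 + 3;

    /** Low byte of the sum of buf[0..len), treating each byte as unsigned. */
    static byte checksum(byte[] buf, int len) {
        int sum = 0;
        for (int i = 0; i < len; i++) {
            sum += buf[i] & 0xFF;
        }
        return (byte) (sum & 0xFF);
    }

    static byte[] encode(int[] channelsUs, int control) {
        if (channelsUs.length != CHANNELS) {
            throw new IllegalArgumentException("expected " + CHANNELS + " channels");
        }
        byte[] frame = new byte[FRAME_LEN];
        for (int i = 0; i < CHANNELS; i++) {
            int us = channelsUs[i];
            if (us < 0 || us > 0xFFFF) {
                throw new IllegalArgumentException("channel " + (i + 1) + " out of 16-bit range");
            }
            frame[2 * i] = (byte) ((us >> 8) & 0xFF);  // high byte first
            frame[2 * i + 1] = (byte) (us & 0xFF);     // then low byte
        }
        frame[CHANNELS * 2] = (byte) control;
        frame[CHANNELS * 2 + 1] = checksum(frame, CHANNELS * 2 + 1);
        frame[CHANNELS * 2 + 2] = DELIMITER;
        return frame;
    }

    public static void main(String[] args) {
        int[] centered = {1500, 1500, 1500, 1500, 1500, 1500};
        byte[] frame = encode(centered, 0x01); // 0x01 = hypothetical "armed" flag
        System.out.println(Arrays.toString(frame));
    }
}
```

Because the delimiter value can also show up inside the channel bytes, the checksum is what lets a receiver reject a window that happens to line up on the wrong `0x7E`.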

With a rough frame format in mind, I was able to write a really basic Arduino interpreter and Java interface. Both leverage existing libraries and are pretty simple to extend/modify.
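On the receiving side, the interpreter has to find frame boundaries in a raw serial byte stream. The real interpreter runs on the Arduino; this is a Java sketch of one way to do it, assuming a hypothetical 15-byte layout (six big-endian 16-bit channels, a control byte, a low-byte-of-sum checksum, and a `0x7E` delimiter). It keeps a sliding window of the last 15 bytes and only accepts a frame when the window ends on the delimiter and the checksum matches, so it resynchronizes on its own after a dropped or corrupted byte. `FrameParser` and its methods are illustrative names.

```java
/**
 * Sketch of a resynchronizing frame parser. Assumed (hypothetical) layout, 15 bytes:
 * 6 x [hi, lo] channel pulse widths, 1 control byte, 1 checksum byte, 1 delimiter (0x7E).
 */
class FrameParser {
    static final int CHANNELS = 6;
    static final int DELIMITER = 0x7E;                  // hypothetical; must match the sender
    static final int FRAME_LEN = CHANNELS * 2 + 3;
    static final int CHECKSUM_INDEX = CHANNELS * 2 + 1;

    private final int[] window = new int[FRAME_LEN];    // last FRAME_LEN bytes seen
    private int filled = 0;                             // how many of those bytes are valid
    private int lastControl = -1;

    /** Feeds one byte; returns the six channel values when a valid frame ends on it, else null. */
    int[] feed(int b) {
        b &= 0xFF;
        // Slide the window left by one and append the new byte at the end.
        System.arraycopy(window, 1, window, 0, FRAME_LEN - 1);
        window[FRAME_LEN - 1] = b;
        if (filled < FRAME_LEN) filled++;
        if (filled < FRAME_LEN || b != DELIMITER) return null;

        // The checksum rejects windows that end on a delimiter-valued byte inside the payload.
        int sum = 0;
        for (int i = 0; i < CHECKSUM_INDEX; i++) sum += window[i];
        if ((sum & 0xFF) != window[CHECKSUM_INDEX]) return null;

        int[] channels = new int[CHANNELS];
        for (int i = 0; i < CHANNELS; i++) {
            channels[i] = (window[2 * i] << 8) | window[2 * i + 1];
        }
        lastControl = window[CHANNELS * 2];
        filled = 0; // start collecting the next frame from scratch
        return channels;
    }

    /** Control/arm byte from the most recent valid frame, or -1 if none has arrived yet. */
    int getLastControl() {
        return lastControl;
    }
}
```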

To actually control the robot, I wanted to use a game controller (the controls are similar to a transmitter's, and game controllers are pretty well documented/implemented). I ended up picking up a Logitech F310: they're pretty cheap and support both DirectInput and XInput (as well as different mapping "modes").

For actually mapping the controller, I wrote a crude Swing GUI that hooks all input devices. I really wanted to avoid hard-coding mappings/controllers, and I assumed someone might come up with some fun control schemes (I guess you could use mouse + keyboard if you were determined to).

Once the mappings are confirmed, the gamepad mapping is serialized and written to a file using Google's Gson (a JSON serialization library).
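A gamepad mapping can be as small as a list of per-axis entries. Below is one hypothetical shape for such an entry: the class name, field names, and the clamp, deadzone, invert, scale, trim pipeline are assumptions for illustration, not the project's actual classes. It takes a raw axis value in [-1, 1] and returns the trimmed/scaled value that a mixing stage would consume.

```java
/** Hypothetical per-axis mapping entry: raw axis value in [-1, 1] -> trimmed/scaled value. */
class AxisMapping {
    String sourceAxis;  // input device axis name, e.g. "y" (illustrative)
    int channel;        // target PPM channel, 1-6
    boolean inverted;
    double deadzone;    // fraction of travel treated as zero, in [0, 1)
    double scale;       // 1.0 = full throw
    double trim;        // offset added after scaling, in normalized units

    AxisMapping(String sourceAxis, int channel, boolean inverted,
                double deadzone, double scale, double trim) {
        if (channel < 1 || channel > 6) throw new IllegalArgumentException("channel must be 1-6");
        if (deadzone < 0 || deadzone >= 1) throw new IllegalArgumentException("deadzone must be in [0, 1)");
        this.sourceAxis = sourceAxis;
        this.channel = channel;
        this.inverted = inverted;
        this.deadzone = deadzone;
        this.scale = scale;
        this.trim = trim;
    }

    double apply(double raw) {
        double v = clamp(raw);
        if (Math.abs(v) < deadzone) {
            v = 0.0;
        } else {
            // Rescale so the output ramps from 0 at the deadzone edge to 1 at full deflection.
            v = Math.signum(v) * (Math.abs(v) - deadzone) / (1.0 - deadzone);
        }
        if (inverted) v = -v;
        return clamp(v * scale + trim);
    }

    private static double clamp(double v) {
        return Math.max(-1.0, Math.min(1.0, v));
    }
}
```

Since an entry like this is a plain object with primitive and `String` fields, Gson can write it out with `new Gson().toJson(mapping)` and read it back with `gson.fromJson(json, AxisMapping.class)`.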

But that's only half the problem: we still need to get to PPM. Ideally, we'd also have some form of mixing/scaling (so we don't need a mixer on the robot). Spektrum appears to use a range of 1100-1900 µs with a midpoint of ~1500 µs, though this seems to depend on the radio (see pascallanger/DIY-Multiprotocol-TX-Module/TX_Def.h for some example ranges).
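Converting a normalized stick value to a pulse width is a linear map around the center value: for v ≥ 0 the output is center + v·(max - center), and for v < 0 it is center + v·(center - min). This sketch assumes inputs in [-1, 1] and scales the two halves separately, so a range whose center isn't exactly midway between its endpoints still maps 0 to the center. The class name `PpmRange` is hypothetical; the 1100/1500/1900 defaults are the approximate Spektrum values mentioned above.

```java
/** Maps a normalized value in [-1, 1] to a pulse width in microseconds. */
class PpmRange {
    /** Approximate Spektrum range; other radios use different endpoints. */
    static final PpmRange SPEKTRUM_APPROX = new PpmRange(1100, 1500, 1900);

    final int minUs;
    final int centerUs;
    final int maxUs;

    PpmRange(int minUs, int centerUs, int maxUs) {
        if (!(minUs < centerUs && centerUs < maxUs)) {
            throw new IllegalArgumentException("need min < center < max");
        }
        this.minUs = minUs;
        this.centerUs = centerUs;
        this.maxUs = maxUs;
    }

    int toMicros(double value) {
        double v = Math.max(-1.0, Math.min(1.0, value));
        // Scale each half separately so 0 always lands exactly on the center value.
        double us = v >= 0 ? centerUs + v * (maxUs - centerUs)
                           : centerUs + v * (centerUs - minUs);
        return (int) Math.round(us);
    }
}
```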

So, another crude GUI and a few more objects were born. The mixing is very, very simple but should be enough for basic robots (and, yet again, can be tweaked later). The GUI hooks the trimmed/scaled values from the gamepad mapping and shows the calculated PPM values in real time.
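As an example of the kind of simple mix that covers a basic robot, here is a standard arcade-to-tank (differential drive) mix: left = throttle + steering, right = throttle - steering, with both sides scaled down together when either exceeds full throw so the turn ratio is preserved. This is a common technique, not necessarily the mix the project implements, and `TankMix` is a hypothetical name. Its outputs would then go through a PPM range conversion like the one above.

```java
/** Hypothetical arcade-to-tank mix: throttle/steering in [-1, 1] -> {left, right} in [-1, 1]. */
class TankMix {
    static double[] arcadeToTank(double throttle, double steering) {
        double t = clamp(throttle);
        double s = clamp(steering);
        double left = t + s;
        double right = t - s;
        // If either side exceeds full throw, divide both by the same factor to keep their ratio.
        double max = Math.max(1.0, Math.max(Math.abs(left), Math.abs(right)));
        return new double[] {left / max, right / max};
    }

    private static double clamp(double v) {
        return Math.max(-1.0, Math.min(1.0, v));
    }
}
```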

The TX Mixes are serialized and written to a file in the same way as the gamepad mappings.

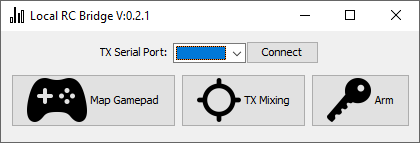

For testing, these were packaged with yet another crude Swing GUI and a bit of glue logic for a "local bridge" mode.

This worked pretty well as a proof of concept and had pretty minimal input latency (I think most of the latency that remains comes from DSM2's relatively slow frame rate of ~22 ms). If someone just wants to use a different controller with their RC models, something as simple as Local Bridge mode works great.

I had loftier goals though. The way I saw it, there's no reason the Gamepad and Transmitter have to be on the same computer. Or in the same house.

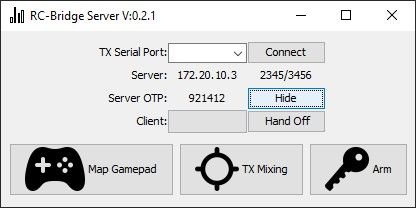

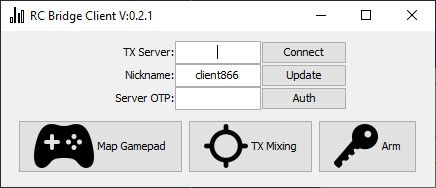

To do this, I implemented a very simple UDP server/client in Java with a basic packet structure. Two ports are used for communication between the client and server, and a one-time password (OTP) is used to authorize new clients. Once a client is authorized, the server can "hand off" control to the client. Gamepad mapping and mixing are handled by whoever is in control (the "handed-off" client or the server).
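The authorization and hand-off bookkeeping on the server can be kept separate from the socket code. The sketch below is one hypothetical way to do it, not the project's actual protocol: the server issues a random 6-character OTP, the first authorization attempt consumes it (matching or not), authorized clients are tracked by their `InetSocketAddress`, and incoming control frames are only accepted from whichever client currently holds control. `ControlSession` and its method names are illustrative.

```java
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.SecureRandom;
import java.util.HashSet;
import java.util.Set;

/** Hypothetical server-side OTP authorization and control hand-off state. */
class ControlSession {
    // Ambiguous characters (0/O, 1/I) left out so the OTP is easy to read aloud.
    private static final String ALPHABET = "ABCDEFGHJKLMNPQRSTUVWXYZ23456789";
    private final SecureRandom rng = new SecureRandom();
    private final Set<InetSocketAddress> authorized = new HashSet<>();
    private String otp;                    // current one-time password, null once used
    private InetSocketAddress controller;  // null means the server itself has control

    String issueOtp() {
        StringBuilder sb = new StringBuilder(6);
        for (int i = 0; i < 6; i++) {
            sb.append(ALPHABET.charAt(rng.nextInt(ALPHABET.length())));
        }
        otp = sb.toString();
        return otp;
    }

    /** Authorizes the client if the attempt matches; the OTP is consumed either way. */
    boolean tryAuthorize(InetSocketAddress client, String attempt) {
        String expected = otp;
        otp = null;
        if (expected == null || attempt == null) return false;
        // Constant-time comparison so response timing doesn't leak how much of the OTP matched.
        boolean ok = MessageDigest.isEqual(
                expected.getBytes(StandardCharsets.UTF_8),
                attempt.getBytes(StandardCharsets.UTF_8));
        if (ok) authorized.add(client);
        return ok;
    }

    /** Hands control to an authorized client; returns false for unknown clients. */
    boolean handOff(InetSocketAddress client) {
        if (!authorized.contains(client)) return false;
        controller = client;
        return true;
    }

    /** Returns control to the server. */
    void takeBack() {
        controller = null;
    }

    boolean serverHasControl() {
        return controller == null;
    }

    /** Only frames from whoever currently holds control should reach the transmitter. */
    boolean acceptsFramesFrom(InetSocketAddress sender) {
        return controller != null && controller.equals(sender);
    }
}
```

Burning the OTP on the first attempt means a typo requires issuing a new one, but it also means a guessed OTP gets exactly one try.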

Yet more GUIs:

And a quick test (with a less-than-ideal iPhone hotspot + VPN for networking):

As it turns out, the hardest part of RC control over the internet is getting an ultra-low-latency video stream of the robot. Most livestreaming platforms (Twitch, YouTube, etc.) seem to have 5+ seconds of round-trip latency, which makes control very difficult. There are (expensive) enterprise solutions, but on the cheap end, WebRTC-based video calls seem to be "good enough". From my testing, Zoom seems to have ~100-150 ms of latency, which is an order of magnitude better than Twitch/YouTube but still doesn't feel great. If anyone has any suggestions on this end of things, please let me know.

For more info about the project, check out the GitLab repo here.